As part of my involvement with Preparing the Professoriate (PTP), I was able to carry out a small project with my advisor’s class, PY202, the second semester of the introductory physics sequence for physics majors. Because the class consists of physics majors, I focused my project on developing the students’ advanced problem-solving skills and on introducing them to powerful computational tools that can help them throughout their physics careers.

Advanced Problem Solving

Summary

To engage the students in more challenging ways, I developed a series of in-class group projects in which students work together to solve problems more complicated than students at their level would typically be asked to solve. These advanced problems are typically given at the end of every chapter and are designed to draw on multiple concepts from current and previous chapters. Students are given a significant amount of class time to discuss the problems with their group members, interact with me or their primary instructor, Dr. Fröhlich, and develop a solution.

After students have turned in their solutions, I lead the class in a discussion of a solution, with individual groups offering their own ideas and insights to the rest of the class. Once a solution has been developed, the groups take time to reflect on the problem: which concepts were important, and what they learned during the group work and/or the subsequent discussion.

The problems were graded to emphasize the approach to solving the problem rather than arriving at a complete, correct solution. Points were awarded for writing down relevant concepts and equations and for using them correctly. This gave students more freedom to try different approaches and new ideas without the stress of possibly not finishing these difficult problems.

Link to In-class problems & solutions given to students

In-Class Problem Reflection

Following the completion of each in-class exercise, students were given a few minutes to reflect on the problem and identify the key concepts needed to solve it, as well as what they, as a group, learned or came to understand better as a result.

From early on, students had a good eye for picking out the core concepts, even if they struggled to apply them as fully as the problem required. By the end of the semester, students seemed much quicker to determine which concepts were being tested and were better able to develop and implement a strategy for solving the problems.

Above are two word clouds generated from the students’ reflective statements. A full list of all reflection statements submitted for each problem can be found here.

Overall Student Feedback

Towards the end of the project, we gave the students an anonymous online survey to gather their evaluation of it. The survey gauged student perception of and engagement with the problems, as well as self-reported improvement over the semester.

The survey showed that most of the class actively participated in the exercises, with over 80% of students reporting that they were an active part of their group more than half of the time. Students also seemed to enjoy the problems: three-quarters indicated that they liked the exercises to some degree. The remaining students were split evenly between neutral and some degree of dislike.

The main goal of this project was to develop the students’ physics intuition and advance their problem-solving skills, and the students indicated that we succeeded, if modestly. For both improvement of physics knowledge and improvement of problem-solving skills, student responses indicate that, on average, the problems were slightly useful. The breakdown of these responses can be seen in the two figures in this section.

Class Scores

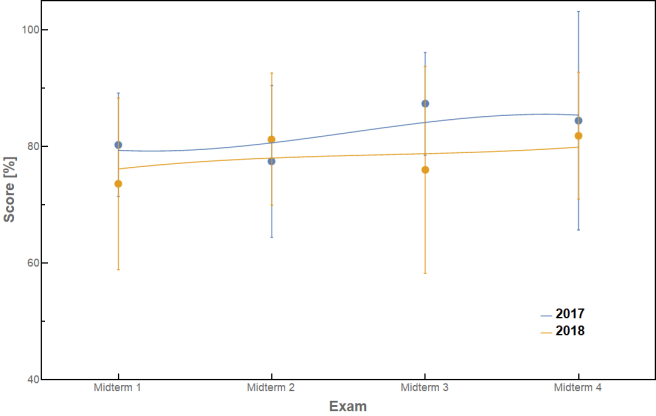

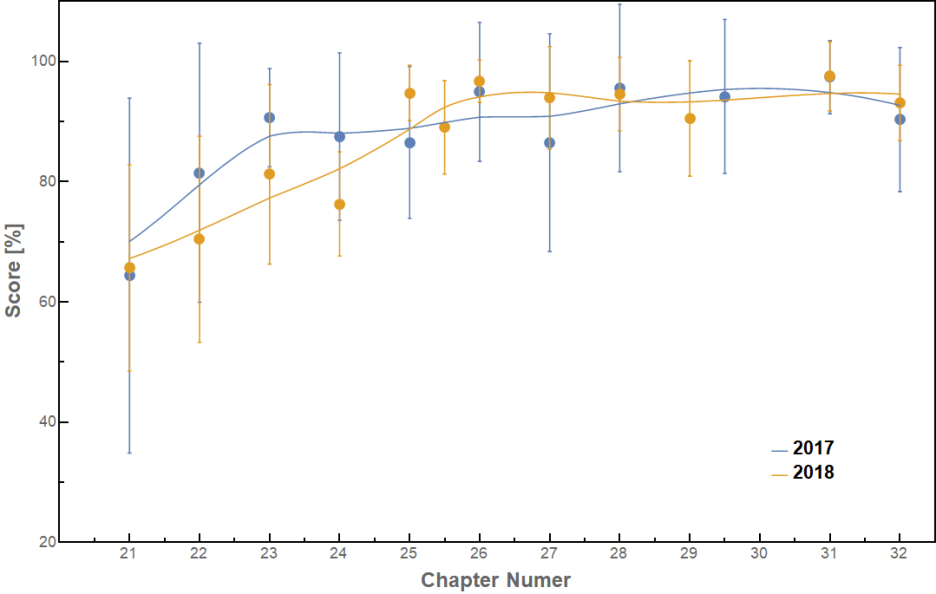

In addition to student self-reporting, we wished to evaluate the students’ performance throughout the semester to gauge the effects of the In-Class Assignments. As my advisor had taught this course the previous year, we were able to compare the two groups for differences in performance. Both years had four exams spaced throughout the semester, a comprehensive Final Exam, and around a dozen homework assignments. They had the same instructor, book, and syllabus. The one difference of note was that a couple of problems on each assignment for the 2017 class were designed to be submitted on the WebAssign system. For the 2018 class, these problems were tweaked to be in line with the rest of the written assignment.

Comparing the exams between the two years first, we can see in the figure above that the two years’ exam scores are not statistically different. With the exception of Midterm 3, the midterm exams have average scores within a few points of the other year’s. At first glance, this indicates that the In-Class problems had no impact on student exam performance.
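The source does not say which statistical test lay behind "not statistically different". One common way to compare two class averages is Welch's unequal-variance t-test, sketched below as an illustration only. The function name `welch_t` and both score lists are hypothetical, invented for this example, and are not the real class data.

```python
import math
from statistics import mean, variance


def welch_t(sample_a, sample_b):
    """Return (t, dof) for Welch's unequal-variance t-test."""
    n_a, n_b = len(sample_a), len(sample_b)
    # Squared standard error of each sample mean (sample variance / n).
    se2_a = variance(sample_a) / n_a
    se2_b = variance(sample_b) / n_b
    t = (mean(sample_a) - mean(sample_b)) / math.sqrt(se2_a + se2_b)
    # Welch-Satterthwaite approximation for the degrees of freedom.
    dof = (se2_a + se2_b) ** 2 / (
        se2_a ** 2 / (n_a - 1) + se2_b ** 2 / (n_b - 1)
    )
    return t, dof


# Hypothetical exam scores (percent), invented for illustration only.
scores_2017 = [72, 81, 77, 69, 85, 78, 74, 80]
scores_2018 = [75, 79, 76, 71, 83, 80, 73, 82]

t_stat, dof = welch_t(scores_2017, scores_2018)
print(f"t = {t_stat:.2f}, dof = {dof:.1f}")
```

With samples of this size, a |t| well below roughly 2 is the kind of result usually described as "not statistically different". A p-value would require the t-distribution, which the standard library does not provide.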

Looking at homework scores, we see a similar scenario. The averages for the first homework assignment are almost identical, and the two homework populations remain statistically indistinguishable as they rise. By the fifth assignment (Chapter 25), scores have begun to hit the 100% grade ceiling, making it impossible for the populations to become statistically distinct unless one of them decreases significantly. Unlike with the exams, however, the error bars appear to be much smaller for the 2018 class.

In the figure above we have plotted the dispersion (the standard deviation of the scores) of each assignment for the two years. Indeed, the dispersion for the 2018 class has fallen into the 5%-10% range, significantly lower than the 10%-15% range of the 2017 class.
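As a minimal sketch of how such a dispersion could be computed, the snippet below takes the dispersion of one assignment to be the sample standard deviation of the percent scores. The helper `summarize` and both score lists are hypothetical and chosen only to show two classes with the same mean but different spreads.

```python
from statistics import mean, stdev


def summarize(scores):
    """Return (mean, sample standard deviation) of a list of percent scores."""
    return mean(scores), stdev(scores)


# Hypothetical homework scores (percent) for one assignment; not the real class data.
hw_2017 = [70, 100, 78, 98, 72, 95, 80, 99]
hw_2018 = [82, 92, 85, 90, 80, 93, 84, 86]

for label, scores in (("2017", hw_2017), ("2018", hw_2018)):
    avg, spread = summarize(scores)
    print(f"{label}: mean = {avg:.1f}%, dispersion = {spread:.1f}%")
```

Repeating this for every assignment and plotting the spread against assignment number would give a figure of the kind described above.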

This indicates that while the exam and homework averages remain statistically unchanged at their design values (75%-80% for exams and 80%-90% for homework), the dispersion of the homework scores is decreasing. I will note, however, that the dispersion of exam scores remained roughly consistent at 10%-15% for both classes across all exams.

Conclusions

By multiple metrics, the In-Class Exercises appear to have been successful. Students were able to assess the core concepts and ideas being asked of them more readily as the semester went on. On average, students believed that these exercises aided their understanding of the physics material and/or their ability to solve complex physics problems. Finally, while the average scores were statistically unchanged for both exams and homework, the standard deviation of the students’ homework scores dropped significantly for the 2018 class, in which the In-Class exercises were used.

This shrinking of the dispersion of grades could be a result of the exercises better illustrating, in a more cooperative and interactive setting, the kinds of problem-solving methods that physics often demands. It could also be a result of the group component, in which students who don’t understand the material are forced to confront that confusion and are given extra opportunities to ask their peers and instructors for clarification.

This group component is the area that I think needs the most work. While students overwhelmingly preferred the groups (65% vs. just 7% who would have preferred to work individually), a common critique among students was a lack of communication and explanation of methods within their groups. It would be worthwhile to pursue a method for encouraging greater group discussion and participation.

One such method would be to allow the group to solve the problem but have each student write up a solution individually. This would push students to ask their peers for the understanding they lack, but it would require either a much greater time commitment to keep the exercise in class or a move to out-of-class assignments, which would remove the convenience of the assignment and the access to the instructor.

A second possibility would be to increase the problem difficulty even further so that no student could complete a problem alone. Student feedback, however, indicated that only around 3% of students thought the problems were easy. The rest perceived the problems as difficult to some degree, with almost 72% indicating that they could not have done them alone. Thus, making the problems harder will likely only leave students with a weaker understanding unable to contribute at all.

Computational Tools

Summary

As part of the PTP project, I have also developed a number of assignments that teach students the skills for, and the usefulness of, a computational tool like Mathematica in their physics studies. Students are assigned additional problem sets specifically designed to be done computationally. These assignments focus on learning the different ways that computational resources can simplify physics, such as by solving complex problems, making plots, and simplifying mathematics. Each assignment spans a few chapters, and the problems are also designed to help emphasize some key points from the class, such as the empirical nature of Ohm’s law.

Availability

To ensure that all students would be able to complete the Mathematica assignments, we took measures to give them access to the software. Before the semester began, we had a computer with Mathematica installed added to the undergraduate SPS room, and we made sure that the public computers in the Astro Graduate Student suite, where my office is located, had Mathematica installed. This meant that any student without a computer of their own would have access to the software in the physics building, where they could seek assistance if needed.

We also took class time to walk the students through the process of applying for, downloading, and installing Mathematica on their own personal computers using the free license the Physics department provides. Feedback from students at the end of the semester indicates that virtually all of them completed the assignments using their own personal laptops.

Student Receptiveness & Implementation Issues

Unlike the In-Class assignments, which students generally favored, the Mathematica assignments had issues from the beginning. Among these were an initially unbalanced workload for students, a misjudgment of how much programming background was necessary, and a miscommunication with students over academic standards on the assignments.

Initially, the Mathematica assignments were designed to be comparable in length to a standard homework assignment but due every 3-4 weeks instead of weekly. This was done to give students more time on each assignment and to allow each assignment to exercise a variety of programming skills multiple times. By the end of the first month, however, it became clear that this was putting too much strain on the students’ workload. Students weren’t working on the assignments gradually over the whole month; instead, they tried to do everything in the last week before the due date. The length of the assignments, combined with other assignments over that period, made the workload too heavy.

As a result, the assignments were shortened significantly, cutting the number of problems to a half or a third of the original. This reduced the workload to accommodate the study patterns students were actually using rather than the pattern we had hoped they would use, while still giving them plenty of time to figure out the problems.

Figuring out the problems, specifically the coding side of them, proved to be a challenge for students throughout the semester. While the Mathematica language was chosen for its user-friendly form and its extensive documentation for new users, students still struggled to translate the physics into code. We had hoped that they could use the Wolfram Documentation Center to pick up the syntax and methods of the language; however, the Documentation Center and online sources accounted for just under 50% of the resources used to complete the assignments. Many students required additional instruction and assistance, for which we had not initially planned class time. As a result, in addition to regular office hours, I began hosting office-hours-style sessions on Saturdays for students to come in and receive additional instruction and guidance.

Additionally, during the early assignments it became very clear that we had an issue with students turning in identical code. We clarified to the students that working on the project together was allowed but that the code should be written up individually, and that sharing code was a violation of academic integrity. We chose not to address any individual instances because a) the problem was very widespread, and b) we feared that it stemmed more from our own lack of definition and clarification.

After a second round of assignments showed additional evidence of copied code, we had discussions with certain students about apparent similarities and had the Office of Student Conduct explain to students the consequences of being reported for academic integrity violations. Because of the various other complications discussed above, we chose not to make any reports after this assignment, but we were very clear that any further instances would have to be reported. Fortunately, after that assignment there were no clear signs of copying or code sharing among student submissions.

Overall, students did not like the Mathematica assignments, with just under 75% indicating that they disliked them to varying degrees, compared with just over 20% who indicated that they liked them at least a little. This is a result of many of the issues with the initial implementation of this project, and it led to a participation rate of only around 77% across all assignments.

Mathematica Results

In the feedback that students provided on the Mathematica assignments, one of the first things that was indicated, beyond their dislike for the project, was the perceived difficulty of the problems.

The goal of the assignments was to use computational tools to do problems that were too mathematically difficult or time-consuming to do by hand. As a result, the physics that went into each problem was meant to be relatively simple, just applied in non-ideal situations where the math didn’t always work out nicely. Despite this, almost 80% of students indicated that the individual Mathematica problems were difficult, with most of them (62%) indicating they were as hard as the In-Class problems we had assigned. The assignments as a whole were rated as difficult by over 90% of the class. Based on my own interactions with the students during office hours and the Saturday sessions, simply introducing a coding requirement increases the perceived difficulty of the physics of a problem. On multiple occasions students would remark on the difficulty (or impossibility) of a problem, and only after we went over the problem to gain a physical intuition for the situation, separating the physics from the coding, would they recognize that these problems were actually very easy.

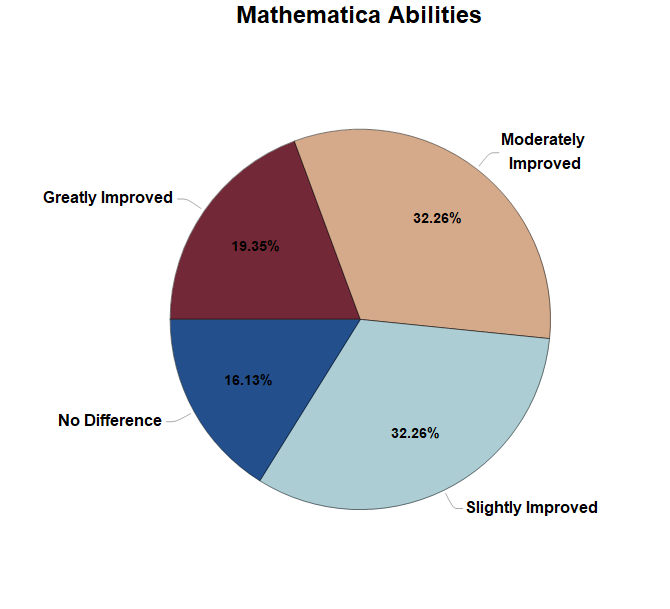

When asked about the Mathematica assignments’ contribution to their physics knowledge or problem solving, students gave much more mixed responses than for the In-Class problems, as can be seen above. In both cases, the majority of the class found the assignments to be of little or no use, but a small subset of students (15%-20%) found them useful in improving one or both skills. Despite this apparent lack of usefulness in their current coursework, only a minority of students polled believed that knowing Mathematica would be useless to them as they continue their academic careers and beyond.

Finally, students were asked to gauge their own improvement and familiarity with the language. The results showed that the majority of students believed they had gotten better with the language to some degree, as shown on the plot below. The student scores generally agree with this: after accounting for students who stopped participating in the assignments, the average scores increased slightly over the semester.

Conclusions & Outlook

Despite all of the problems with the initial implementation of this project, the outcomes were still generally favorable. The students were able to improve their skills with a powerful and useful tool for doing physics, and most of them recognize that having this skill is something that they will benefit from in their future.

The primary issue with this project was the lack of guidance and instruction in the use of Mathematica; we relied on students to pick up the language on their own. While this might be a more attainable goal for students further along in their academic careers, it is not something that new students should be expected to do.

The other side of this is that for any course that already exists, there isn’t class time to spare for the instruction of coding practices. Thus the implementation of this project in any future classes will need to find a way to provide more guidance and instruction in the execution of the assignment itself.

This project has been assessed by various faculty members who wish to see it implemented again in the future and expanded to cover the two-semester sequence of introductory physics I and II. I will thus be working with the instructors for both of these courses, as well as instructors for programming-specific classes already in the curriculum, to improve this project for future semesters.